Hey kids, come here - grampa is telling a story from the war... ;)

I'm currently hunting a bunch of race conditions in a rather large C/C++ code base. Some of them manifest quite visibly as heap memory corruptions (the reason I'm searching), others are subtle and just visible in one-in-a-thousand slightly off output values.

Now ten years ago, when you were facing such bugs, probably all you could do was to read all thet code, verify the locking structure and look out for racyness. But things have changed substantially. We have valgrind.

Valgrind is a binary code instrumentation framework and a collection of tools on top of that. It will take your binary and instrument loads, stores and a bunch of important C library functions (memory management, thread synchronization, ...). It can then perform various checks on your running code. So it belongs in the category of dynamic analysis tools.

Luckily the buggy code also runs on linux where valgrind is available so I went on to try it. And boy did it find subtle bugs...

There are two different tools available for race conditions: helgrind and drd. I'm not yet 100% sure how both of them work but as far as I understand, they are tracking memory loads and stores together with synchronization primitives and build a runs-before graph of code segments. When code segments access shared memory in a conflicting way (reader/writer problem) without having a strong runs-before relationsship these are race conditions and will be reported.

Sadly I'm also finding real races in well known open source libraries that are supposed to be thread safe and work correctly ... seems they do not. This tells me, that the developers are not using all the tools available to check their code.

And this is my message: If you are writing an open source library or application that only remotly has something to do with threads, save yourself and your users a lot of trouble and use valgrind!

When your security measures go too far, your users will work around it.

(seen at a train station in Berlin)

My guess here is, that the person who was planning the locking system just forgot the break room of the train drivers or did not update the locking system when it was moved behind this door.

For various reasons I've been studying all kinds of authentication protocols, lately. A good authentication protocol should, for my purposes, fulfill the following properties:

- It does not disclose the secret to an eavesdropper over the wire.

- It does not require the server to store the secret.

- It is reasonably simple to implement.

As always, it seems to be a case of "choose two".

A little surprise for me was CRAM-MD5. Of course MD5 should not be used anymore, but CRAM-MD5 can trivially be changed into a CRAM-SHA256 just by replacing the underlying hash function. So let's keep the weak hash function out for the purpose of the discussion.

The point is, CRAM-MD5 fulfills 1. and 3. but NOT 2. This often is not immediately obvious and users and server administrators might not be aware of it. E.g. when you look at the native user database of a CRAM-MD5 enabled dovecot server, you will see something like this:

username:{CRAM-MD5}652f707b0195b2730073e116652e22f20125ec6413a957773b65ebe33d7b3ad0:1001:1001::/home/username

This looks like a hash of the password you use to authenticate to the dovecot server.

But CRAM-MD5 is more or less just an alias for a classic challenge-response protocol based on HMAC-MD5.

In a classic HMAC based authentication protocol the server sends the client a

random nonce value and the client is supposed to respond with HMAC(secret, nonce).

Then the server must also calculate HMAC(secret, nonce) on his side and compare the clients

response with the expected result. If they match, he can know that the client also knew the secret.

As you can already see, the server MUST know the secret (unless there is some magical trick somewhere). So I looked into the relevant RFC-2159 for the CRAM protocol and RFC-2104 for the HMAC details. And deep in there in section "4. Implementation Note" in RFC-2104 you will find the answer.

Let's look at it in detail. First we need the definition of the HMAC function.

HMAC(secret, nonce) =

H( secret ^ opad,

H( secret ^ ipad, nonce )

)

Where H(m1, m2, ...) is a cryptographic hash function applied on it's concatenated arguments.

opad and ipad are padding values that depend on the length of secret. ^ is a bitwise XOR.

Now most hash functions can also be implemented with an additional initialization vector (IV) argument which

contains the hash functions last internal state.

With such an IV, a previous hash calculation can be continued at the place where it was stopped.

If you only store the IVs for H( secret ^ opad ) and H( secret ^ ipad ) you can still calculate the complete

HMAC while not storing the secret in plain.

HMAC(secret, nonce) =

H( IV_opad,

H( IV_ipad, nonce )

)

Turns out this is exactly what dovecot and probably any other sane CRAM-MD5 implementation does and has to do.

While this protects the original secret (your password) to some degree, the stored IVs are exactly as powerfull as the secret. If an attacker manages to get the stored CRAM-MD5 IV he can use it to log in into any other account that supports CRAM-MD5 and uses the same password.

That means, unlike crypt or bcrypt hashed password databases, a database with CRAM-MD5 IVs must be kept secret from any non-authorized user.

So while the CRAM-MD5 challenge-response authentication looks like beeing more secure than a plaintext authentication, it actually depends on the attacker model. You might be better off with just plaintext authentication over SSL (and a proper bcrypt password-hash database).

(via bassdrive) some DNB for coding sessions:

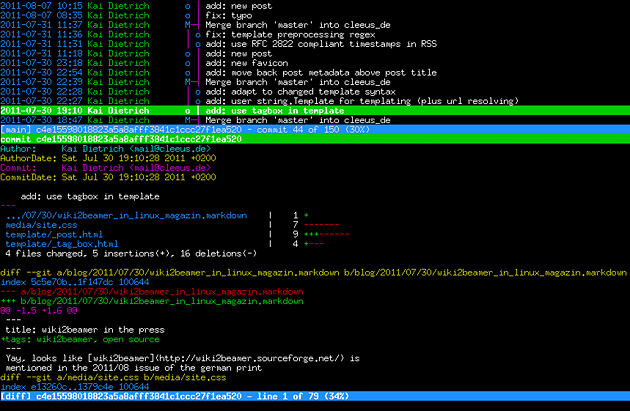

Once again, I found a valuable tool for the shell that can replace a much more complex gui tool: tig. Tig can replace gitk and doesn't need X11. So if you need/want to work on an environment where gui is not trivial (X11 on cygwin anyone? Or maybe just ssh on a build VM?), but need some more overview over your git repository, tig may help. Others have written about it before, so I will just provide a screenshot: